Containers are the lifeblood of every Docker installation. They serve as the foundation of the Docker platform and allow you to run services on your computer without worrying about dependencies and version conflicts. Here, we show you the basics of creating, managing, and customizing Docker containers using the Docker CLI tool.

Note: Get started by first installing Docker on your Linux system.

How to Find and Pull a Docker Image

Docker containers are a special type of software environment that allows you to run programs separate from the rest of your original system. To achieve this, Docker uses “software images.” These are static copies of programs that serve as the base from which a container starts.

This distinction between image and container allows you to recreate and adapt your software in any way necessary. For example, you can have an image such as “httpd” but spin up two distinct containers out of it: “website1” and “website2.”

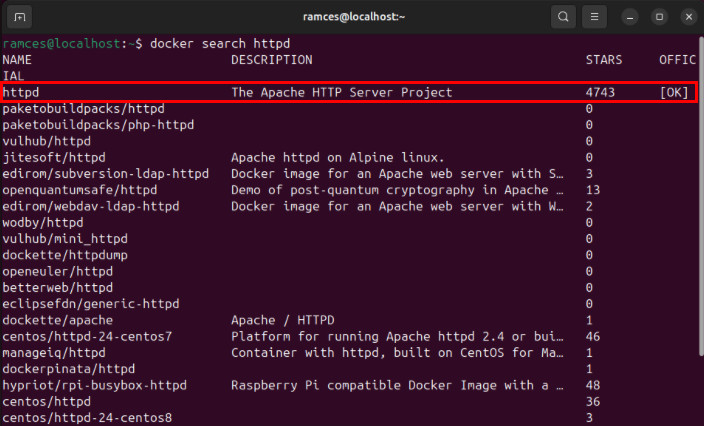

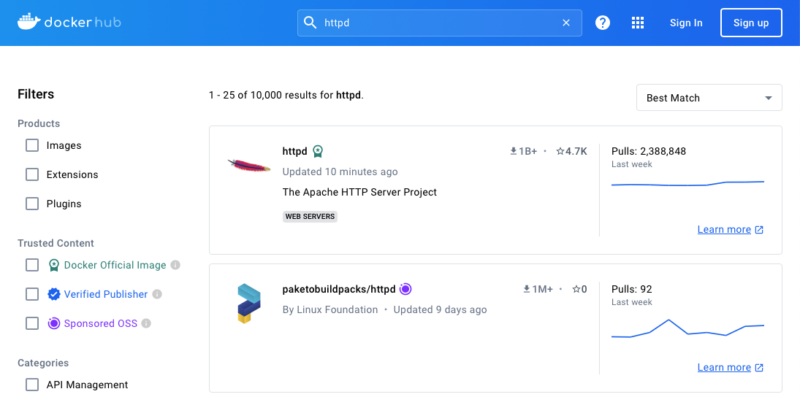

The easiest way to look for a new Docker image is to use the search subcommand:

docker search httpd

You can also search for packages on the Docker Hub website if you prefer to use your web browser.

To download the image to your system, run the following command:

docker image pull httpd

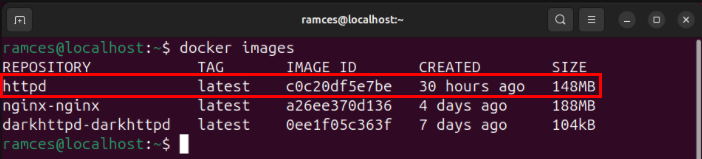

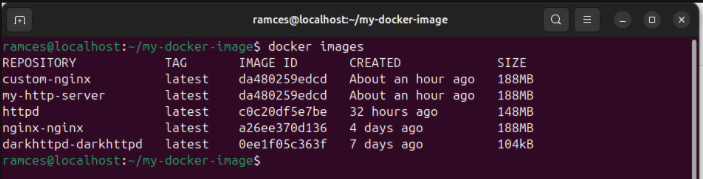

Confirm that you’ve properly added your new image to your system using the images subcommand:

docker images
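The pull-and-confirm steps above can be combined so that an image is only downloaded when it is missing. The following is a minimal sketch, not a definitive script: the function name ensure_image is hypothetical, and it assumes that docker image inspect exits with a non-zero status when the image is not stored locally.

```shell
# ensure_image IMAGE
# Pull IMAGE only if it is not already stored locally.
# "ensure_image" is an illustrative name, not a Docker subcommand.
ensure_image() {
    if docker image inspect "$1" >/dev/null 2>&1; then
        echo "$1 is already present"
    else
        docker image pull "$1"
    fi
}

# Example: ensure_image httpd
```

Checking first avoids contacting the registry when the image is already on your system.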

Building a New Image Using Dockerfiles

Aside from pulling prebuilt images from Docker Hub, you can build images straight from the Docker CLI. This is useful if you want to create custom versions of existing software packages or port new apps to Docker.

To do this, first create a folder in your home directory for your build files:

mkdir ~/my-docker-image && cd ~/my-docker-image

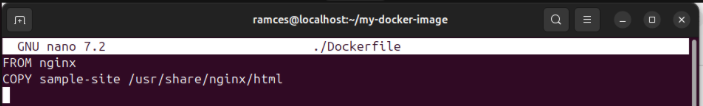

Create a new Dockerfile using your favorite text editor:

nano ./Dockerfile

Paste the following lines of code inside your new Dockerfile:

FROM nginx
COPY sample-site /usr/share/nginx/html

Create a “sample-site” folder and either copy in or make a basic HTML site:

mkdir ./sample-site
cp ~/index.html ./sample-site/

Save your new Dockerfile, then run the following command to build it on your system:

docker build -t custom-nginx .

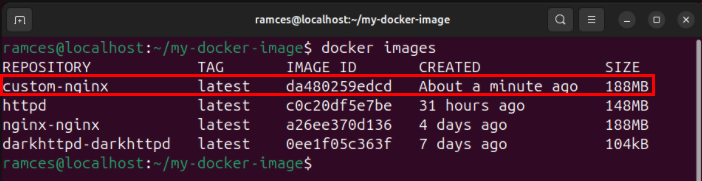

Check if your new Docker image is present in your list of Docker images:

docker images
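If you rebuild images often, the folder setup above can be collected into one function. This is a sketch under stated assumptions: the scaffold_site_image name and the placeholder HTML page are invented for illustration, while the Dockerfile it writes contains the same FROM and COPY instructions used in this section.

```shell
# scaffold_site_image DIR
# Create DIR with a Dockerfile and a placeholder sample-site/index.html.
# The function name and the placeholder page are illustrative assumptions.
scaffold_site_image() {
    mkdir -p "$1/sample-site" || return 1

    # Placeholder page; replace it with your own site files.
    printf '%s\n' '<h1>Hello from custom-nginx</h1>' > "$1/sample-site/index.html"

    # Each Dockerfile instruction sits on its own line.
    cat > "$1/Dockerfile" <<'EOF'
FROM nginx
COPY sample-site /usr/share/nginx/html
EOF
}

# Example:
#   scaffold_site_image ~/my-docker-image
#   docker build -t custom-nginx ~/my-docker-image
```

Passing the folder path to docker build instead of a dot lets you run the build from any working directory.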

Building a New Image Using Existing Containers

The Docker CLI tool can also build new images out of the containers that currently exist in your system. This is useful if you’re already working on an existing environment and you want to create a new image out of your current setup.

To do this, make sure that your container is not currently running:

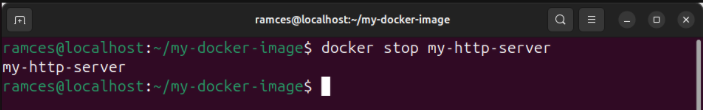

docker stop my-http-server

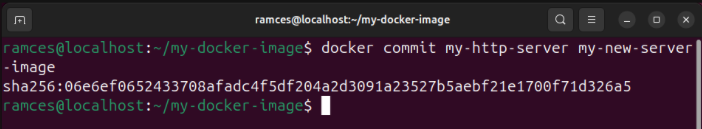

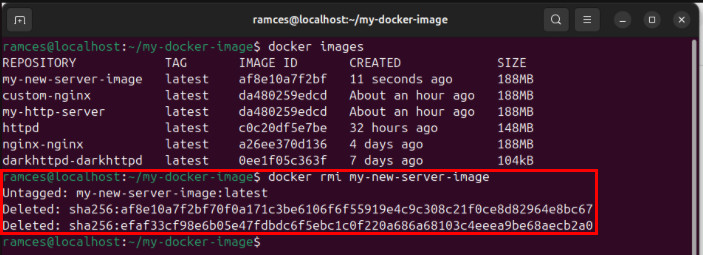

Run the commit subcommand followed by the name of your container, then provide the name of your new Docker image after that:

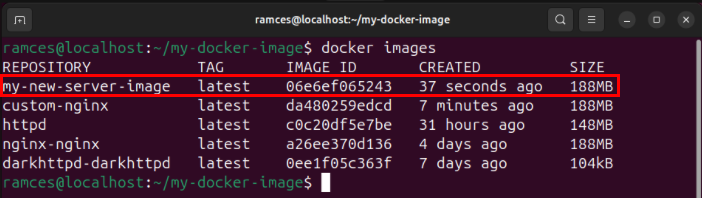

docker commit my-http-server my-new-server-image

Confirm that your new Docker image is in your system by running docker images.
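The stop and commit steps can be wrapped together, as in the rough sketch below. The snapshot_container name is hypothetical. The -m flag of docker commit attaches a message to the new image, which helps when you later need to remember where a snapshot came from.

```shell
# snapshot_container CONTAINER IMAGE
# Stop CONTAINER, then save its filesystem as IMAGE with a commit message.
# "snapshot_container" is an illustrative name, not a Docker subcommand.
snapshot_container() {
    docker stop "$1" >/dev/null || return 1
    docker commit -m "Snapshot of $1" "$1" "$2"
}

# Example: snapshot_container my-http-server my-new-server-image
```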

How to Run and Stop a Docker Container

With your Docker image ready, you can now start using it to create your first container. To do this, use the run subcommand followed by the name of the image that you want to run:

docker run httpd

While this will work for running your first Docker container, doing it this way will take over your current shell session. To run your container in the background, add the -d flag after the run subcommand:

docker run -d httpd

The run subcommand can also take in a number of additional flags that can change the behavior of your new Docker container. For example, the --name flag allows you to add a customizable name to your container:

docker run -d --name=my-http-server httpd

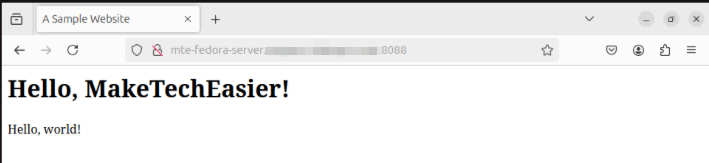

On the other hand, you can use the --publish flag to map a port on your host machine to a port inside your Docker container. This is primarily useful if you don’t want your container to take over a privileged port. The following command forwards port 8080 on the host to port 80 inside the container:

docker run -d --name=my-http-server --publish 8080:80 httpd

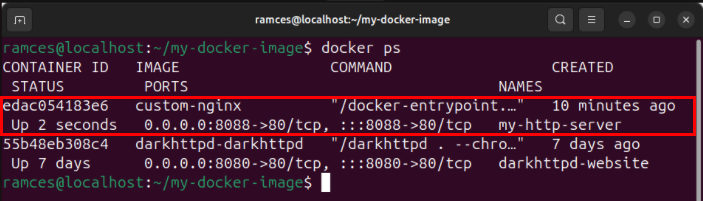

You can check all the currently running Docker containers in your system by running the following command:

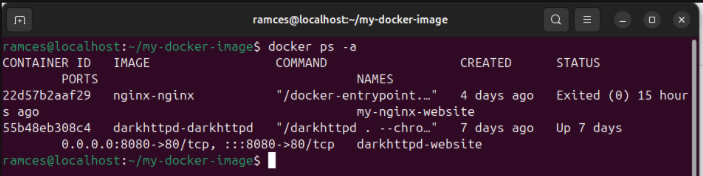

docker ps

Similar to the run subcommand, ps can also take in a handful of flags that will modify how it behaves. For instance, to list every container on your system, including the ones that are currently stopped, use the -a flag:

docker ps -a

To turn off a running container, use the stop subcommand followed by either the container ID or the name of your Docker container:

docker stop my-http-server

You can restart any container that you’ve stopped by running the start subcommand:

docker start my-http-server
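Running docker run a second time with a --name that is already taken fails with a name conflict, so it helps to decide between run and start automatically. The function below is a minimal sketch of that idea. The name start_or_run and the default host port of 8080 are assumptions for illustration, and it relies on docker container inspect failing when no container has the given name.

```shell
# start_or_run NAME IMAGE [HOST_PORT]
# Start the container NAME if it already exists; otherwise create it from
# IMAGE in the background, mapping HOST_PORT (default 8080) to port 80.
# "start_or_run" is an illustrative name, not a Docker subcommand.
start_or_run() {
    if docker container inspect "$1" >/dev/null 2>&1; then
        docker start "$1"
    else
        docker run -d --name "$1" --publish "${3:-8080}:80" "$2"
    fi
}

# Example: start_or_run my-http-server httpd 8080
```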

Pausing and Killing a Docker Container

The Docker CLI tool also allows you to temporarily pause or forcibly kill a running container process. This can be useful if you’re troubleshooting an issue with your Docker setup and you want to either isolate or stop a misbehaving container.

Start by running docker ps to list all the running containers in the system.

Find either the ID or the name of the container that you want to manage.

Run the pause subcommand followed by the name of the container that you want to temporarily suspend:

docker pause my-http-server

You can resume a suspended process by running the unpause subcommand:

docker unpause my-http-server

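To immediately stop a misbehaving process, run the kill subcommand followed by the name of your container. Unlike stop, which gives the process time to shut down cleanly, kill terminates it right away: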

docker kill my-http-server
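In practice, it is often safer to try a clean shutdown first and only fall back to kill when that fails. The sketch below shows one way to express this. The stop_or_kill name and the 10-second default are illustrative assumptions. The -t flag of docker stop sets how many seconds Docker waits before force-stopping the container.

```shell
# stop_or_kill NAME [SECONDS]
# Ask NAME to shut down, giving it SECONDS (default 10) to exit cleanly;
# fall back to "docker kill" if the stop command itself reports an error.
# "stop_or_kill" is an illustrative name, not a Docker subcommand.
stop_or_kill() {
    docker stop -t "${2:-10}" "$1" || docker kill "$1"
}

# Example: stop_or_kill my-http-server 5
```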

How to Inspect a Docker Container

Knowing the intricate details of your container is a vital part of maintaining the health of your Docker stack. It allows you to quickly look at any potential issues and it can be the difference between fixing and redoing your entire deployment.

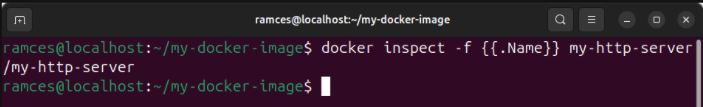

To look at an overview of your Docker container, run the inspect subcommand:

docker inspect my-http-server

Doing this will print a long JSON document that describes the current state of your entire container. You can narrow this down either by piping the output to jq or by using the built-in -f flag followed by a Go template that names the field that you want to print. Wrap the template in single quotes so that your shell passes the braces through unchanged:

docker inspect -f '{{.Name}}' my-http-server
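The same -f approach reaches nested fields too. The helper functions below are a short sketch with hypothetical names (container_state and container_ip). The field paths .State.Status and .NetworkSettings.Networks come from the JSON that docker inspect prints for a container.

```shell
# container_state NAME
# Print the container's status string, such as "running" or "exited".
# Both function names in this block are illustrative, not Docker subcommands.
container_state() {
    docker inspect -f '{{.State.Status}}' "$1"
}

# container_ip NAME
# Print the IP address of NAME on each network it is attached to.
container_ip() {
    docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}} {{end}}' "$1"
}

# The jq equivalent of container_state, assuming jq is installed:
#   docker inspect my-http-server | jq -r '.[0].State.Status'
```

Note the .[0] in the jq version: docker inspect wraps its output in a JSON array, even for a single container.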

Printing Container Logs to the Terminal

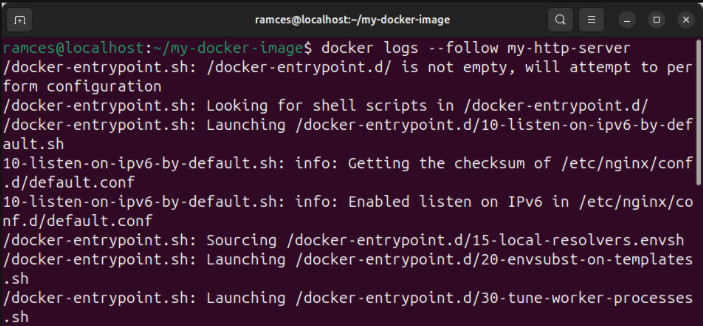

Aside from that, you can also track and print the logs of any currently running Docker container. This can be useful if you want to check how your service currently behaves and look at the output that it’s returning to STDOUT.

To do this, run the logs subcommand followed by the name of your container:

docker logs my-http-server

You can also run the logs subcommand with the --follow flag to create a continuous log of your Docker service. This is similar to running tail -f at the end of a UNIX pipe:

docker logs --follow my-http-server

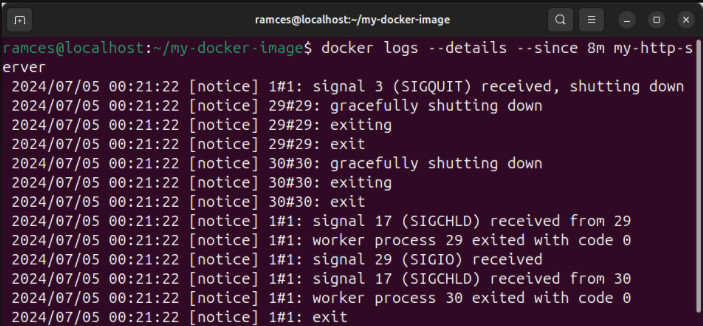

Similar to other subcommands, you can also add various flags to customize the output of your Docker container’s log. For example, the --timestamps flag adds a detailed timestamp to every message that your container sends to its STDOUT:

docker logs --timestamps my-http-server

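The --details flag prints extra attributes alongside each log message, such as environment variables and labels, provided that you passed them to the logging driver when you created the container. Meanwhile, the --since flag allows you to only show logs that happened after a particular point in time. For example, 8m limits the output to the last eight minutes: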

docker logs --details --since 8m my-http-server
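These flags combine well with standard text tools. As a minimal sketch, the function below filters recent log lines for a pattern. The grep_logs name and the 10-minute default window are assumptions for illustration. The 2>&1 redirect is there because docker logs relays the container's STDERR on its own STDERR, which a pipe would otherwise skip.

```shell
# grep_logs NAME PATTERN [SINCE]
# Print timestamped log lines from the last SINCE (default 10m) that match
# PATTERN, ignoring case. STDERR is merged in so both streams are searched.
# "grep_logs" is an illustrative name, not a Docker subcommand.
grep_logs() {
    docker logs --timestamps --since "${3:-10m}" "$1" 2>&1 | grep -i -- "$2"
}

# Example: grep_logs my-http-server error 8m
```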

How to Customize a Docker Container

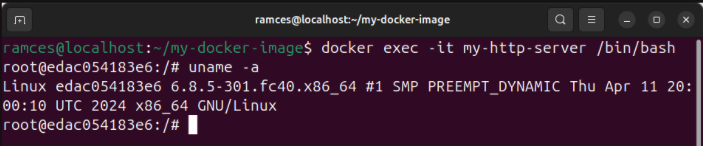

At its core, a Docker container is a small, stripped-down Linux environment running on top of your current system. This means that, similar to a virtual machine, it’s possible to access and modify the data inside your container.

To copy a local file from your host machine to the container, run the cp subcommand:

docker cp ~/my-file my-http-server:/tmp

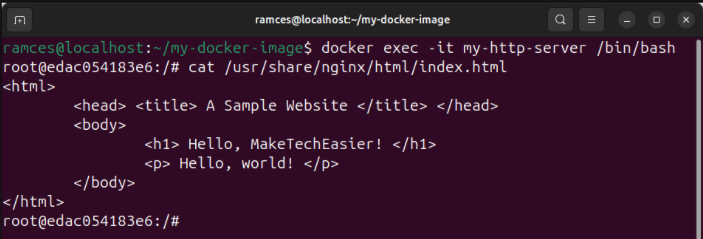

Sometimes you will also need to “step into” containers by opening a shell inside them. This way you can edit files, install binaries and customize them according to your needs:

docker exec -it my-http-server /bin/bash

Now, you could, for example, edit “index.html” and create a homepage for the website within.

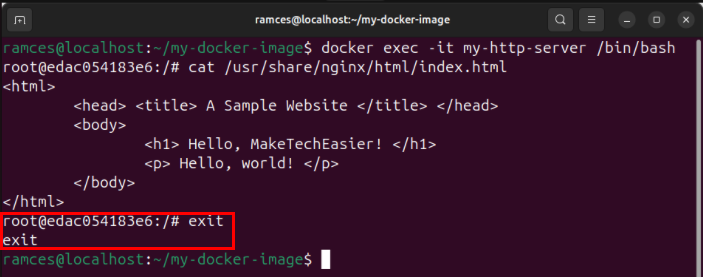

Exit the container’s shell by either pressing Ctrl + D or running exit in the terminal.
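You don't always need an interactive shell. The sketch below, using the hypothetical names run_in_container and copy_from_container, shows two non-interactive variants of the commands above: docker exec without -it runs a single command and returns, and docker cp also works in the other direction, from the container to the host.

```shell
# run_in_container NAME COMMAND [ARGS...]
# Run one command inside NAME without opening an interactive shell.
# Both function names in this block are illustrative, not Docker subcommands.
run_in_container() {
    container=$1
    shift
    docker exec "$container" "$@"
}

# copy_from_container NAME CONTAINER_PATH HOST_PATH
# Copy a file or directory out of NAME onto the host.
copy_from_container() {
    docker cp "$1:$2" "$3"
}

# Examples:
#   run_in_container my-http-server ls /tmp
#   copy_from_container my-http-server /tmp/my-file ./my-file
```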

How to Delete Docker Containers and Images

Removing unused Docker containers and images is an important part of general housekeeping for your deployment. Doing that allows you to remove unnecessary files from your server, saving storage space in the long run.

Before you delete a container, make sure that you’ve stopped it first:

docker stop my-http-server

Now, remove the container using the rm subcommand:

docker rm my-http-server

Confirm that you’ve properly deleted your old Docker container by running docker ps -a.

Delete your original Docker image from your Docker deployment:

docker rmi my-new-server-image

Check if you’ve properly removed your original Docker image by running docker images.
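The order of these steps matters, because docker rmi refuses to delete an image while a container created from it still exists, even a stopped one. The function below is a small sketch that chains the three commands so each runs only if the previous one succeeded. The name remove_container_and_image is hypothetical.

```shell
# remove_container_and_image CONTAINER IMAGE
# Stop and delete CONTAINER, then delete IMAGE. Each step runs only if the
# previous one succeeded, so the image is not touched while CONTAINER
# still exists.
# "remove_container_and_image" is an illustrative name.
remove_container_and_image() {
    docker stop "$1" &&
        docker rm "$1" &&
        docker rmi "$2"
}

# Example: remove_container_and_image my-http-server my-new-server-image
```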

Learning how to create, manage, and remove Docker containers is just some of what you can do with your Linux server. Explore the deep world of Linux system administration by hosting a server and Docker container hub with XPipe.

Image credit: Shamin Haky via Unsplash. All alterations and screenshots by Ramces Red.