No doubt, our modern life depends on computers, and computers depend on silicon-based processor chips. Computers keep improving over time because their processing power keeps growing.

Moore’s law is the observation that computer chips get faster, more energy efficient, and cheaper to produce at a predictable rate. The number of transistors placed on a silicon chip doubles roughly every two years (a figure often quoted as eighteen months). In recent years, however, each new generation of computer chip has delivered a smaller performance boost than the one before.
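
The doubling rule is simple compounding, and a few lines of Python make the arithmetic concrete. This is only a rough sketch: the function name `projected_transistors` and the one-billion-transistor starting point are illustrative assumptions, not figures from any real chip, and the doubling period is left as a parameter so the eighteen-month and two-year figures can be compared.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count forward, assuming it doubles at a steady rate."""
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical starting point: a chip with one billion transistors.
start = 1_000_000_000

for years in (2, 4, 10):
    two_year = projected_transistors(start, years)
    eighteen_month = projected_transistors(start, years, doubling_period_years=1.5)
    print(
        f"after {years:>2} years: {two_year:.3g} (2-year doubling), "
        f"{eighteen_month:.3g} (18-month doubling)"
    )
```

Even a small change in the doubling period compounds into a large gap after a decade, which is why the exact figure matters when people argue about whether the trend still holds.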

Moore’s law is not a physical law like Newton’s three laws of motion. Instead, it is an observation of what was happening in the chip-making industry.

Moore’s law will end. There will come a time when we can no longer fit more transistors onto a single silicon chip, and silicon chips already seem to be approaching their peak in performance and efficiency. New computers and technology, however, will still require more powerful and agile processors.

While some believe that Moore’s law-style improvements in speed can continue until at least 2025, there is a risk that Moore’s law will end before a viable replacement is ready, so we need to explore alternatives to silicon-based computing today.

Quantum Computing

Quantum computing harnesses the quantum behavior of subatomic particles. Its basic unit of information is the quantum bit, or “qubit,” and it promises processing power and speed that are currently unimaginable.

The main problem with quantum computing right now is that it has yet to outpace conventional silicon-based technology on the tasks computers already perform. That advantage has remained just out of reach.

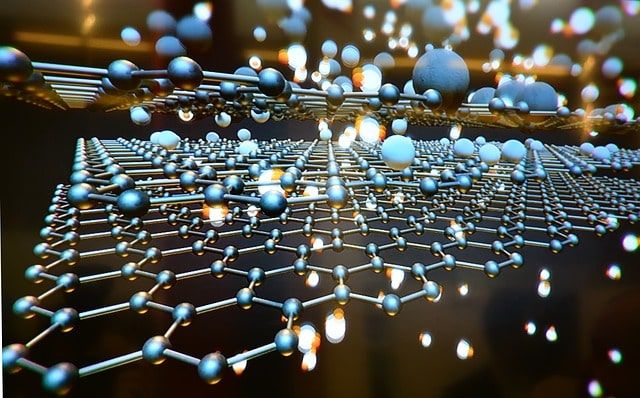

Graphene and Carbon Nanotubes

Graphene is a single layer of carbon atoms that is believed to be the strongest material on earth. It is 200 times stronger than steel yet elastic enough to be stretched by another 20% to 25% of its original length. It’s exceptionally lightweight and conducts heat and electricity better than most other known materials. Because graphene is made of carbon, the raw material is extremely plentiful, but it may be years before graphene is available for commercial production.

Graphene cannot yet be used as a switch. Silicon semiconductors can be turned on and off with an electrical signal, but pristine graphene has no band gap, so a graphene transistor cannot be fully switched off.

If graphene can replace silicon in chips, it opens the possibility of technology like foldable laptops, lightning-fast transistors, and cell phones that will not break.

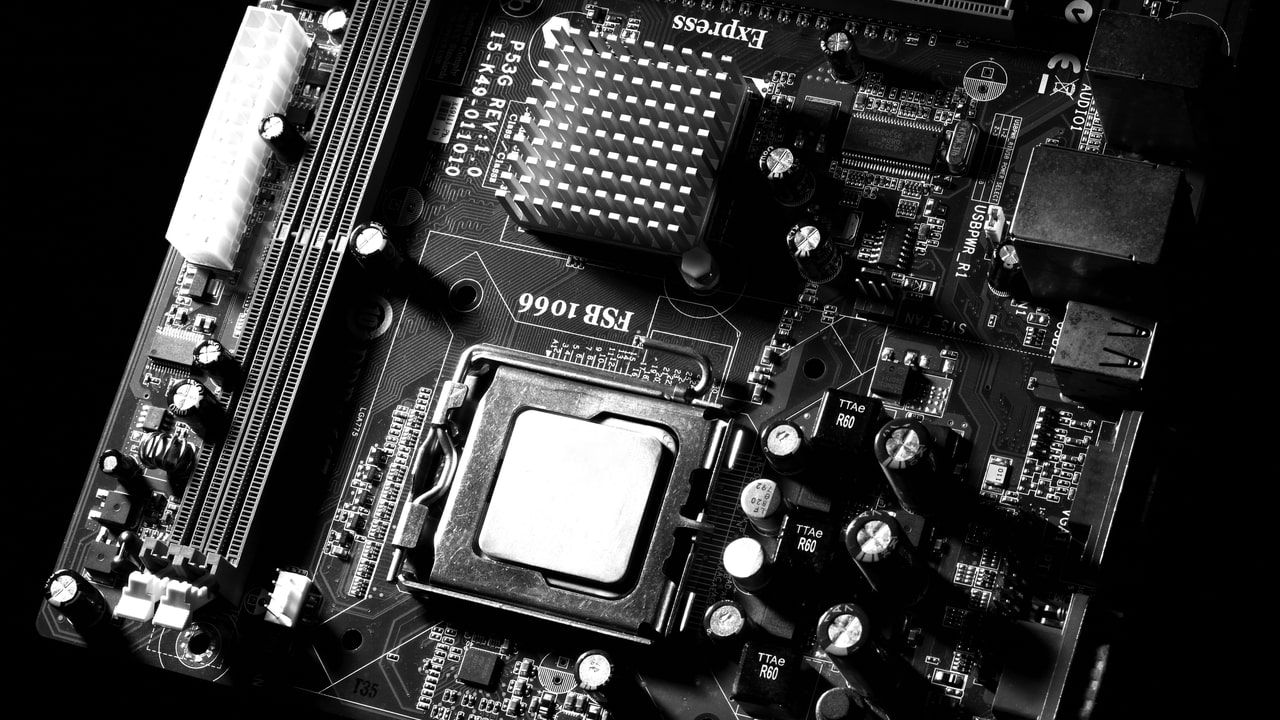

Nanomagnetic logic

Nanomagnetic logic (NML) depends on arrays of nanomagnets. These magnets range in size from a few nanometers to a few hundred nanometers. Nanomagnets play the same role as silicon transistors, but the binary code is created by switching the direction of magnetization rather than by switching a current. NML uses dipole-to-dipole interactions (the interaction between the north and south poles of neighboring magnets) to transmit data, and because data does not travel as an electric current, it needs only a small amount of power to run.

Cold computing

Cold computing is not a brand new technology, but manufacturers see it as a way to extend the life of Moore’s law. Reducing the temperature of the chip reduces current leakage, which in turn allows a lower threshold voltage at which transistors switch. Cold computing may buy an additional four to ten years of scaling in memory performance and power.
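
To see why temperature matters so much for leakage, here is a minimal sketch based on the textbook subthreshold model, in which the off-state current of a transistor scales as exp(-Vth / (n·kT/q)). The function name, the 0.3 V and 0.2 V threshold voltages, the ideality factor of 1.5, and the 77 K operating point (liquid nitrogen) are all illustrative assumptions. Real devices deviate from this simple model at very low temperatures, so treat the result as a trend rather than a prediction.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K, so kT/q comes out in volts


def relative_off_current(threshold_voltage, temperature_k, ideality=1.5):
    """Relative subthreshold leakage at zero gate voltage (unitless, textbook model)."""
    thermal_voltage = BOLTZMANN_EV_PER_K * temperature_k  # kT/q in volts
    return math.exp(-threshold_voltage / (ideality * thermal_voltage))


# Assumed values for illustration only.
room = relative_off_current(0.3, 300.0)          # 0.3 V threshold at room temperature
cold = relative_off_current(0.3, 77.0)           # same threshold at 77 K
cold_low_vth = relative_off_current(0.2, 77.0)   # lower threshold at 77 K

print(f"same threshold, 77 K vs 300 K: leakage ratio {cold / room:.2e}")
print(f"lower threshold at 77 K vs 300 K: leakage ratio {cold_low_vth / room:.2e}")
```

In this simplified model, the cold chip leaks less than the room-temperature chip even with a lower threshold voltage, which is the headroom cold computing tries to exploit.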

Compound semiconductors

Semiconductors created from two or more elements can be faster and more efficient than silicon alone. These semiconductors are already available and will soon find their way into 5G and 6G phones, giving them more speed, a smaller size, and better battery life.

Atomic

Technology has evolved to the point where we can manipulate materials down to the atomic level, and chip technology is no exception. IBM has devised a possible way to store data on a single atom. Today it takes about 100,000 atoms to store a single 1 or 0.

Single atoms are by nature unstable as storage elements, so for this to be a viable option, more logic for things like error correction will be needed.

Which replacements are most likely?

Compound semiconductors are the only alternative to silicon-based processors that is viable today. Beyond that, the technology that seems most promising at the moment is nanomagnetic computing. It’s also possible that the computers of the future will contain layers of various technologies, each counteracting the disadvantages of the others. But at the moment, no one can accurately predict what the computers of the future will look like.