Building AI is complicated, but understanding it doesn’t have to be. Most existing artificial intelligences are just really good guessing machines (like our brains). You feed in a bunch of data (such as the numbers 1 through 10), ask it to build a model (each number is the previous one plus 1, starting from 0), and have it make a prediction (the next number will be eleven). There’s no magic, other than what humans do every day: using what we know to make guesses about things we don’t know.
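To make the guessing-machine idea concrete, here is a minimal Python sketch that “learns” from the numbers 1 through 10 by fitting a straight line through them with ordinary least squares, then uses that line to guess the next number. The function names are made up for illustration, and real systems fit far more complicated models, but the shape is the same: data in, model out, prediction from the model.

```python
def fit_line(xs, ys):
    """Ordinary least squares: the slope and intercept with the smallest squared error."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def predict_next(sequence):
    """Fit value = slope * position + intercept, then evaluate at the next position."""
    positions = list(range(len(sequence)))  # 0, 1, 2, ...
    slope, intercept = fit_line(positions, sequence)
    return slope * len(sequence) + intercept


print(predict_next(list(range(1, 11))))  # 11.0
```

The “model” the program found is value = 1 × position + 1, which is the same rule a person would spot.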

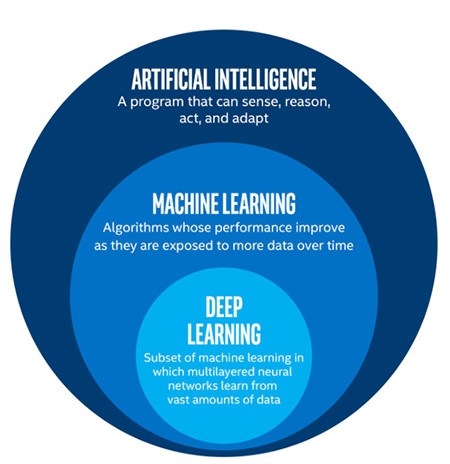

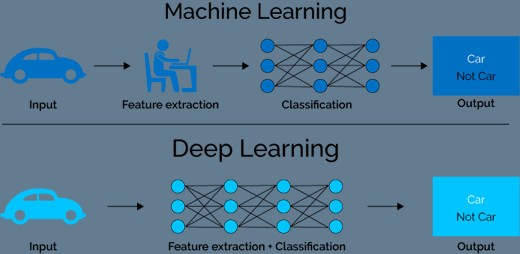

What sets AI apart from other computer programs is that we don’t have to specifically program it for every scenario. We can teach it things (machine learning), and it can teach itself as well (deep learning). While there are multiple varieties of each, they can be broadly defined as follows:

- Artificial Intelligence (AI): a machine that is able to imitate human behavior

- Machine Learning: a subset of AI where people train machines to recognize patterns in data and make predictions

- Deep Learning: a subset of machine learning in which the machine largely trains itself, working out from raw data which features matter

Artificial Intelligence

The broadest possible definition of AI is simply a machine that thinks like a human. It might be as simple as following a logical flowchart, or it could be a nearly human computer that can learn from a wide variety of sensory inputs and apply that knowledge to new situations. That last part is key: the “strong AI” that everyone imagines (also called artificial general intelligence) is one that can connect all kinds of learned data points, giving it the ability to handle almost any situation.

Right now AI is still on quite a narrow track: Alexa is an amazing butler, but she can’t pass a Turing test. What we have today is a limited (“narrow”) form of AI, but it’s good to remember that the definition is so broad that it could eventually cover programs that make DeepMind’s AlphaGo look like a calculator.

Machine Learning

Without machine learning, existing AI would be mostly limited to running through long lists of “if x is true, do y; otherwise, do z.” Machine learning, however, gives computers the ability to figure things out from data without being explicitly programmed for every case. As an example of one type of machine learning, let’s say you want a program to be able to identify cats in pictures:

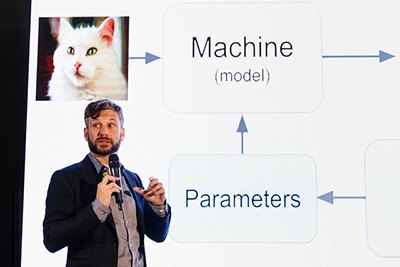

- Give your AI a set of cat characteristics to search for – individual lines, bigger shapes, color patterns, etc.

- Run some pictures through the AI – some or all may be labeled “cat” so the machine can more efficiently pick out relevant cat features.

- After the program has seen enough cats, it should know how to find one in a picture – “If picture contains Feature X, Y, and/or Z, it’s 95% likely to have a cat.”
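The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not how a real image classifier is built: it assumes each picture has already been reduced to three yes/no feature detectors (the feature names and the eight labeled examples are made up), and it swaps in logistic regression, one common classic machine learning technique, to learn how much weight each human-chosen feature deserves. The output is a probability, much like the “95% likely” rule in the last step.

```python
import math

# Step 1 (hypothetical features): a human decided these are worth checking.
FEATURES = ("has_pointy_ears", "has_whiskers", "has_striped_fur")

# Step 2 (made-up labeled examples): feature values, then 1 for "cat", 0 for "not cat".
TRAINING = [
    ((1, 1, 1), 1), ((1, 1, 0), 1), ((0, 1, 1), 1), ((1, 1, 0), 1),
    ((1, 0, 0), 0), ((0, 0, 1), 0), ((0, 0, 0), 0), ((0, 1, 0), 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(examples, epochs=2000, lr=0.5):
    """Logistic regression: learn how much each human-chosen feature counts."""
    weights = [0.0] * len(FEATURES)
    bias = 0.0
    for _ in range(epochs):
        for x, label in examples:
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            error = p - label  # gradient of the log-loss w.r.t. the weighted sum
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def cat_probability(model, x):
    """Step 3: turn the weighted evidence for a new picture into a probability."""
    weights, bias = model
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


model = train(TRAINING)
print(dict(zip(FEATURES, (round(w, 2) for w in model[0]))))
print(f"{cat_probability(model, (1, 1, 1)):.0%} likely to be a cat")
```

Here the humans chose what to look for; training only decides how much each feature counts.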

As complicated as machine learning sounds, it can be boiled down to this: “Humans tell computers what to look for, and computers refine those criteria until they have a model.” It’s fairly simple and extremely useful: it filters your spam, recommends your next Netflix shows, and tweaks your Facebook feed. Try Google’s Teachable Machine for a quick hands-on demonstration!

Deep Learning

As of 2018, this is the cutting edge of AI. Think of it as machine learning with deep (many-layered) “neural networks,” which are loosely modeled on the way a human brain processes data. The key difference from its predecessor is that humans don’t have to tell a deep learning program which features make a cat look like a cat. Just give it enough pictures of cats, and it’ll figure that out on its own:

- Input a lot of cat photos.

- The algorithm will inspect the photos to see what features they have in common (hint: it’s cats).

- Each photo will be broken down into multiple levels of detail, from big, general shapes to tiny lines. If a shape or line shows up again and again, the algorithm will treat it as an important characteristic.

- After analyzing enough pictures, the algorithm now knows which patterns provide the strongest evidence of cats, and all humans had to do was provide the raw data.
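The steps above boil down to letting a network find its own features. Cats are far too big for a short example, so this toy sketch in plain Python swaps in the classic XOR problem (output 1 when exactly one of two inputs is 1), which no single weighted sum of the raw inputs can solve; the hidden layer has to invent intermediate features on its own. Two caveats: the network is still shown the right answer for each example (what it is not given is a list of features), and “deep” networks stack many more layers than the single hidden layer here. The layer size, learning rate, and epoch count are arbitrary assumptions.

```python
import math
import random

# XOR: the answer is 1 when exactly one input is 1. No single weighted sum of the
# raw inputs can separate the two classes, so the hidden layer must learn features.
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_xor(hidden=4, epochs=5000, lr=0.5, seed=1):
    """Train a 2-input, one-hidden-layer network with backpropagation."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(hidden)]  # input -> hidden
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]  # hidden -> output
    b2 = 0.0

    def forward(x):
        h = [sigmoid(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j]) for j in range(hidden)]
        y = sigmoid(sum(w2[j] * h[j] for j in range(hidden)) + b2)
        return h, y

    def mean_squared_error():
        return sum((forward(x)[1] - t) ** 2 for x, t in DATA) / len(DATA)

    start_loss = mean_squared_error()
    for _ in range(epochs):
        for x, t in DATA:  # update after every example (stochastic gradient descent)
            h, y = forward(x)
            dy = (y - t) * y * (1 - y)  # error signal at the output unit
            for j in range(hidden):
                dh = dy * w2[j] * h[j] * (1 - h[j])  # computed before w2[j] changes
                w2[j] -= lr * dy * h[j]
                w1[j][0] -= lr * dh * x[0]
                w1[j][1] -= lr * dh * x[1]
                b1[j] -= lr * dh
            b2 -= lr * dy
    return start_loss, mean_squared_error(), lambda x: forward(x)[1]


start_loss, end_loss, predict = train_xor()
print(f"mean squared error: {start_loss:.3f} -> {end_loss:.3f}")
for x, target in DATA:
    print(x, "target", target, "prediction", round(predict(x), 2))
```

The error should drop as training proceeds; how close the final predictions get to 0 and 1 depends on the random starting weights, and small networks like this one occasionally get stuck.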

To summarize: deep learning is machine learning where the machine trains itself, and it goes far beyond cats. Neural networks can now generate reasonably accurate descriptions of what’s in a picture.

Deep learning requires a lot more initial data and computing power than traditional machine learning, but it’s beginning to be deployed by companies from Facebook to Amazon. Its most famous showcase, though, is DeepMind’s AlphaGo, a program that, after learning from human games, played games of Go against itself until it could predict strong moves well enough to beat top professionals such as Lee Sedol and Ke Jie.
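Go is far too big for a short example, so this sketch swaps in a tiny take-away game (a Nim variant: players alternately remove 1 to 3 sticks, and whoever takes the last stick wins) to show the self-play idea. Two copies of the same table-based learner play each other and nudge the estimated win rate of every move they made toward the game’s outcome. This is plain tabular Monte Carlo learning, not AlphaGo’s method, which combines deep neural networks with tree search; the pile size, exploration rate, and episode count are arbitrary assumptions.

```python
import random

MAX_PILE = 12        # largest starting pile (an arbitrary choice)
ACTIONS = (1, 2, 3)  # a player may remove 1, 2, or 3 sticks per turn


def legal_moves(pile):
    return [a for a in ACTIONS if a <= pile]


def choose(q, pile, epsilon, rng):
    """Epsilon-greedy: usually play the best-rated move, occasionally explore."""
    moves = legal_moves(pile)
    if rng.random() < epsilon:
        return rng.choice(moves)
    return max(moves, key=lambda a: q[(pile, a)])


def self_play(episodes=50_000, epsilon=0.1, alpha=0.1, seed=0):
    rng = random.Random(seed)
    # q[(pile, take)] estimates how often the player making that move goes on to win.
    q = {(p, a): 0.5 for p in range(1, MAX_PILE + 1) for a in legal_moves(p)}
    for _ in range(episodes):
        pile = rng.randint(1, MAX_PILE)
        history = []  # moves alternate between the two copies of the same learner
        while pile > 0:
            take = choose(q, pile, epsilon, rng)
            history.append((pile, take))
            pile -= take
        # The last mover took the final stick and won; earlier moves alternate loss/win.
        for i, move in enumerate(reversed(history)):
            outcome = 1.0 if i % 2 == 0 else 0.0
            q[move] += alpha * (outcome - q[move])
    return q


def best_move(q, pile):
    return max(legal_moves(pile), key=lambda a: q[(pile, a)])


q = self_play()
for pile in range(1, 10):
    print(f"{pile} sticks left: take {best_move(q, pile)}")
```

Without being told the strategy, the learner should end up preferring moves that leave its opponent a multiple of 4 sticks, the known winning strategy for this game. From a pile of 4 or 8, every move loses against perfect play, so whichever move it picks there is arbitrary.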

Conclusion: AI = Apocalyptic Intelligence?

Hollywood is responsible for a lot of bad science, but when it comes to AI, truth and fiction potentially aren’t that far apart. It’s not inconceivable that an AI could take over a spaceship (2001: A Space Odyssey), make you fall in love with it (Her), or behave exactly like a human (Blade Runner, Ex Machina).

That doesn’t make it a bad bet, though. AI could accelerate human progress faster than almost anything before it. And, though it may seem cynical, the reality is that if responsible scientists stay away from AI because of its potential to go wrong, it will probably be developed anyway by people with fewer safety concerns. We’ve taken computers from checkers to Go, and the next few steps could take humanity to some interesting places.