Facebook could use some good PR after the latest news revealed that the social media company had allowed the data of millions of users to be stolen. To help right the ship, Facebook has created an open-source dataset that it believes will reduce AI bias.

Facebook Aims to Fix AI Bias

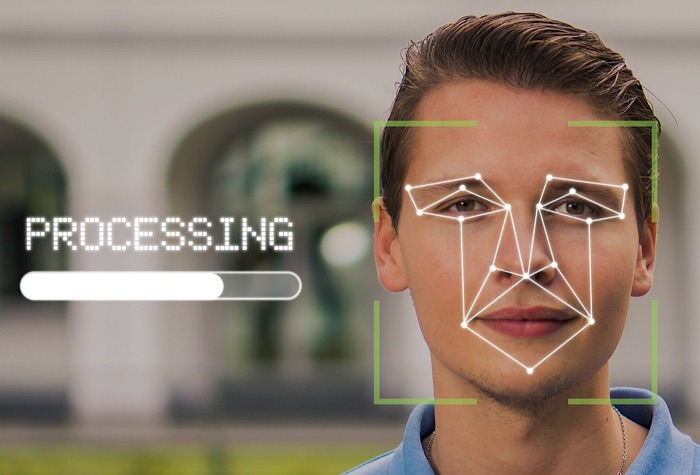

A long-standing problem with facial recognition has been AI bias. While artificial intelligence tries to identify people through their unique facial features, it has historically performed worse on faces that are not male and white.

Facebook has set out to fix AI bias with an open-source dataset it calls “Casual Conversations.” It includes 45,186 videos of more than 3,000 people having unscripted conversations. The participants span different genders, age groups, and skin tones.

Actors were paid to submit videos that included their own descriptions of their age and gender, to remove as much AI bias as possible. The Facebook team then labeled the videos by skin tone based on the Fitzpatrick scale, which classifies skin into six types.

Lighting conditions were noted as well, so that algorithms can be checked on how they handle different skin tones in low light. Both audio and visual AI can be tested with the Casual Conversations dataset. The purpose isn’t to develop algorithms but to evaluate how existing algorithms perform with different faces.

Two of the datasets currently used for facial recognition, IJB-A and Adience, are composed mostly of lighter-skinned people: 79.6 percent of IJB-A and 86.2 percent of Adience.

Beyond skin tone, the classifiers from IBM, Microsoft, and Face++ performed better with male faces than with female faces in an MIT study. There were nearly no mistakes with white male faces, while darker female faces had an error rate of nearly 35 percent.

Casual Conversations aims to help evaluate the algorithms currently in use. “Our new Casual Conversations dataset should be used as a supplementary tool for measuring the fairness of computer vision and audio models, in addition to accuracy tests, for communities represented in the dataset,” said Facebook’s team working on the project.
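To make the idea of measuring fairness alongside accuracy concrete, here is a minimal Python sketch of one common approach: computing a model's error rate separately for each labeled group instead of as a single overall number. The function name, the group labels, and the sample records are all made up for illustration; they are not Facebook's evaluation code or real results from the dataset.

```python
from collections import defaultdict


def error_rate_by_group(records):
    """Return {group: error rate} for (group, predicted, actual) tuples."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


# Hypothetical predictions, grouped by an assumed skin-tone label.
# These values are invented and do not come from Casual Conversations.
sample = [
    ("type I-II", "real", "real"),
    ("type I-II", "fake", "fake"),
    ("type V-VI", "real", "fake"),
    ("type V-VI", "fake", "fake"),
]

rates = error_rate_by_group(sample)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.0%} error rate")
```

A large gap between groups in a report like this is the kind of signal that an overall accuracy figure would hide.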

Casual Conversations Evaluations

Facebook used Casual Conversations to test the five winning algorithms from the Deepfake Detection Challenge in 2020. The challenge had been set up to find algorithms that could identify doctored media being posted online.

Despite being well-respected algorithms, they struggled with darker skin tones. The third-place winner in the challenge actually fared the best with Casual Conversations.

Facebook has already released the dataset to the open-source community. In doing so, it noted that the dataset labels gender only as “male,” “female,” or “other,” and so cannot specifically represent people who identify as nonbinary.

“Over the next year or so, we’ll explore pathways to expand this dataset to be even more inclusive, with representations that include a wider range of gender identities, ages, geographical locations, activities, and other characteristics,” said Facebook of its efforts to reduce AI bias.

Read on to learn about Microsoft’s efforts to have facial recognition regulated to eliminate bias.