Machines that learn are nothing new. Type some instructions into a batch file and you can make your computer do just about anything the programs on it allow. Add a webcam and facial recognition software and your computer can recognize your face. However, none of this is the result of the computer’s “thoughts.” At best, today’s average home computer can emulate thinking. Teams around the world are nevertheless developing ways to reproduce human thinking in machines, combining the best of both worlds to create a form of learning that mimics the intuitive way in which we make sense of the world around us.

Although many of us are wary of the implications of artificial intelligence, it is widely regarded as the pinnacle of the evolution of the machine. How far have we come in our pursuit of machines that approach human intuition and abstract thought? We’re going to look at what the Google Brain team is doing and how artificial neural networks could influence the way technology interacts with us on a daily basis in the near future.

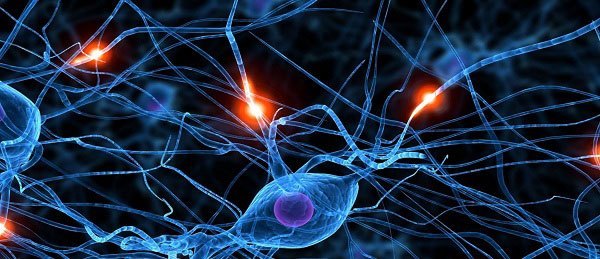

What Is An Artificial Neural Network?

An artificial neural network, put simply, is a system whose algorithm is inspired by the way humans learn. At present, personal computers are creatures of habit. They rigorously follow a single set of instructions to the end, regardless of whether the results make sense. For example, a computer system that analyzes consumer behavior on a website could show that a large number of visitors click on a link at the top right corner of every page, but it cannot explain why that happens. It cannot adapt its methods to dig deeper and extract meaning from the raw data it’s churning through.

A “perfect” artificial neural network will be able to adapt the way it processes information to fit the data it’s confronted with. This is especially useful in audiovisual processing, where rule-based programming is very inefficient. While an American will quickly get used to an Australian accent, a computer has much more trouble with the same task. Artificial neural networks are designed so that a computer may be able to interpret differences in how Australians speak the same way we do: by picking up the fluctuations in tone and pronunciation, building a context, and filling in any gaps with other information conveyed in the sentence. Doing this with conventional, rule-based programming is much harder than it seems.
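To make the contrast with rule-based programming a little more concrete, here is a minimal sketch of a single artificial neuron (a perceptron) written in Python. It is a toy illustration only and says nothing about how Google Brain or any speech recognizer is actually built. The example data, the function names `predict` and `train`, and the learning rate are all assumptions made for this sketch. Nobody writes the rule for the neuron; it starts out knowing nothing and nudges its own weights every time it guesses wrong.

```python
# Toy illustration: one artificial neuron (a perceptron) that learns the
# logical AND function from examples instead of from a hand-written rule.
# The data, names, and learning rate are assumptions made for this sketch.

def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(examples, epochs=20, learning_rate=1):
    """Start with zero weights and adjust them after every wrong guess."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in examples:
            # error is +1, 0, or -1 depending on how the guess was wrong
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Each example pairs two inputs with the answer we want the neuron to give.
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(EXAMPLES)
results = [predict(weights, bias, inputs) for inputs, _ in EXAMPLES]
print(results)  # [0, 0, 0, 1]
```

A real network stacks many thousands of these units in layers and learns from far messier data, but the principle is the same: the behavior comes from adjusting weights in response to examples, not from a rule typed in by a programmer.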

What’s Google Brain?

Google Brain is a project that focuses on large-scale deep learning. It involves a colossal amount of machinery, with 16,000 CPU cores in Google’s data centers working in unison to create a machine that can effectively “learn” and “understand” things. The above image is actually a “drawing” that the network made. It didn’t “copy” the design from anywhere; it simply constructed it abstractly, as a painter would.

One of the most notable accomplishments of this project is the network’s ability to detect cats. Modern computers can easily display a video of a cat for your entertainment, but they can’t understand what they are showing you. No one expects their computer to know what a cat is. Yet computers show videos of these fuzzy little creatures millions of times per day around the world, completely ignorant of their existence. The computer you’re reading this on is probably no more than a glorified interactive television. Google managed to create a system that could point out the cat in a still image (with no previous instruction as to what a cat is). This is a remarkable accomplishment that could take us all a step further in the information age.

Applications for Neural Networks

Imagine having a robot with you that can not only drive you to work but can also serve as a medic when you’re injured. The simple fact that a computer can pick out a cat when it is surrounded by other objects has major implications. You may have to wait a while (16,000 CPU cores are very difficult to fit in a small space at the moment), but distinguishing a wound from the skin surrounding it (and identifying the type of wound) means that a “medical module” on a robot could help it suture that wound. Once you take a bit of time to think about it, artificial neural networks could lead to feats of technology we never thought we’d see in our lifetimes. Perhaps one day not too far from now we’ll be taking robots along as biking buddies and playing football with them, all thanks to the way in which they can adapt and learn just like us.

What do you think? Is it overly optimistic to think that we can go from “cat detector” to “robot doctor” at some point in our lives? Tell us below in a comment!