If you’ve ever had to compress large volumes with tar, you’ll know how much of a pain it can be. Compression often runs so slowly that you find yourself hitting Ctrl + C to end the task and just forget about it. However, tar can hand the compression step off to other tools, and they’re a great way to make use of today’s heavily multi-threaded CPUs and speed up your tar archiving. This article shows you how to make tar use all cores when compressing archives in Linux.

Understanding and Installing the Tools

The three main tools in question here are pigz, pbzip2, and pxz. They are parallel versions of gzip, bzip2, and xz, so the differences between them are really the differences between those three formats. In that order, the compression ratio increases, meaning that an archive compressed with gzip will be larger than one compressed with xz, but gzip will take less time than xz. bzip2 is somewhere in the middle.

The “p” that starts the name of each tool means “parallel.” Parallelization, meaning how well a workload spreads across all CPU cores, has become increasingly relevant over the years. With CPUs like AMD’s Epyc and Threadripper lines that can reach 64 cores and 128 threads, it’s important to understand which applications can make use of them. Compression is a prime candidate.

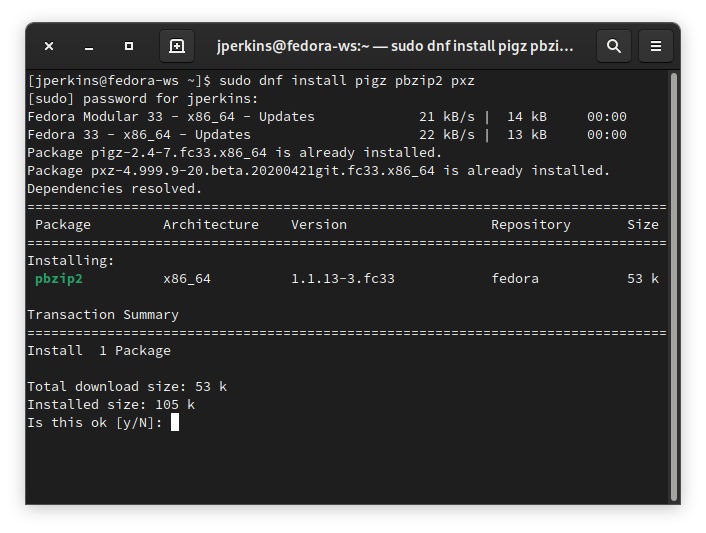

To install the tools, you can just turn to your repos.

sudo apt install pigz pbzip2 pxz # Debian/Ubuntu
sudo dnf install pigz pbzip2 pxz # Fedora
sudo pacman -Sy pigz pbzip2 pxz # Arch Linux
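After installing, it can help to confirm what is actually available. The following is a minimal sketch, not part of the original walkthrough, that prints the number of CPU threads and checks whether each of the three tools is on your PATH. It assumes a POSIX shell with `nproc` or `getconf` present.

```shell
#!/bin/sh
# Count the CPU threads the parallel compressors could use.
threads=$(nproc 2>/dev/null || getconf _NPROCESSORS_ONLN)
echo "CPU threads available: $threads"

# Report which of the three tools are on PATH.
for tool in pigz pbzip2 pxz; do
    if command -v "$tool" >/dev/null 2>&1; then
        echo "$tool: found at $(command -v "$tool")"
    else
        echo "$tool: not found"
    fi
done
```

If a tool shows up as not found, your distribution may package it under a different name or not ship it at all.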

This article focuses on pxz for the sake of consistency. You can check out this tutorial for pigz.

Compressing Archives with Tar

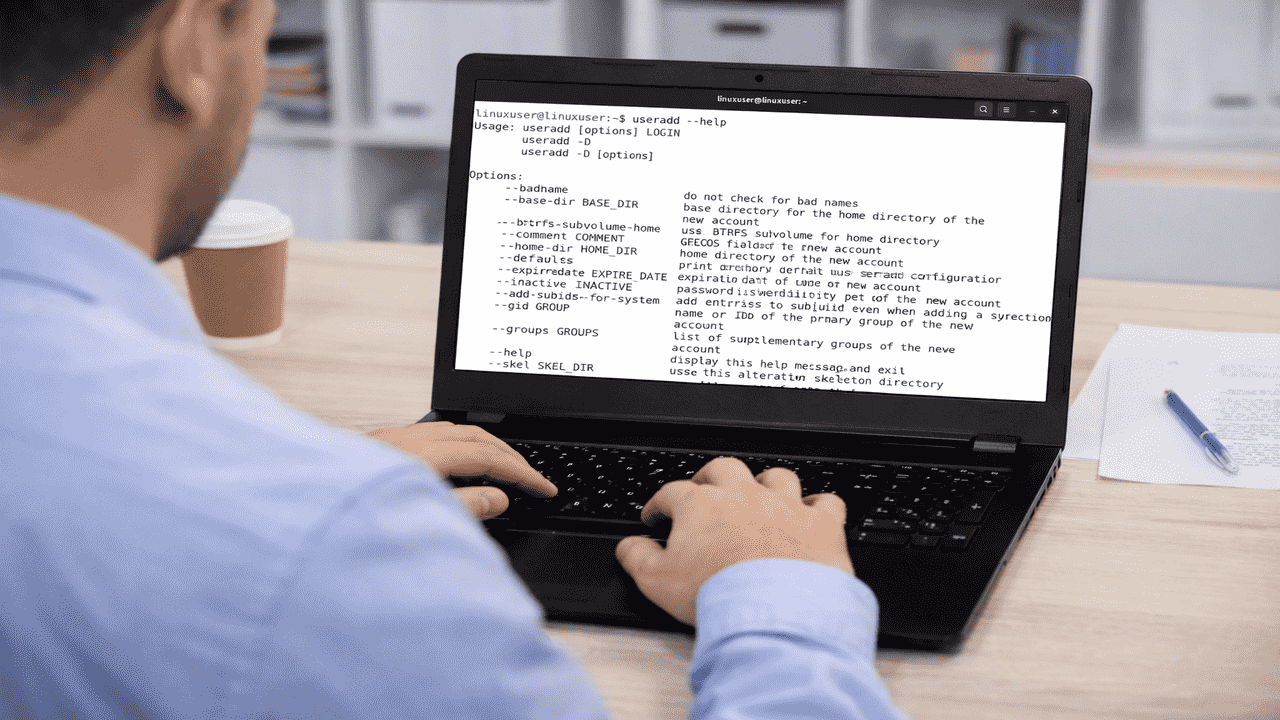

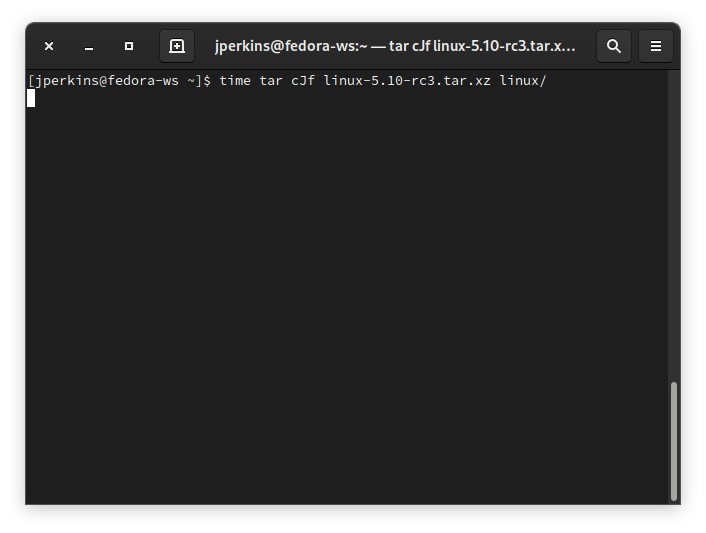

The syntax of tar is fairly simple. To just compress a directory, you can use a command like this:

tar czf linux-5.10-rc3.tar.gz linux/
tar cjf linux-5.10-rc3.tar.bz2 linux/
tar cJf linux-5.10-rc3.tar.xz linux/

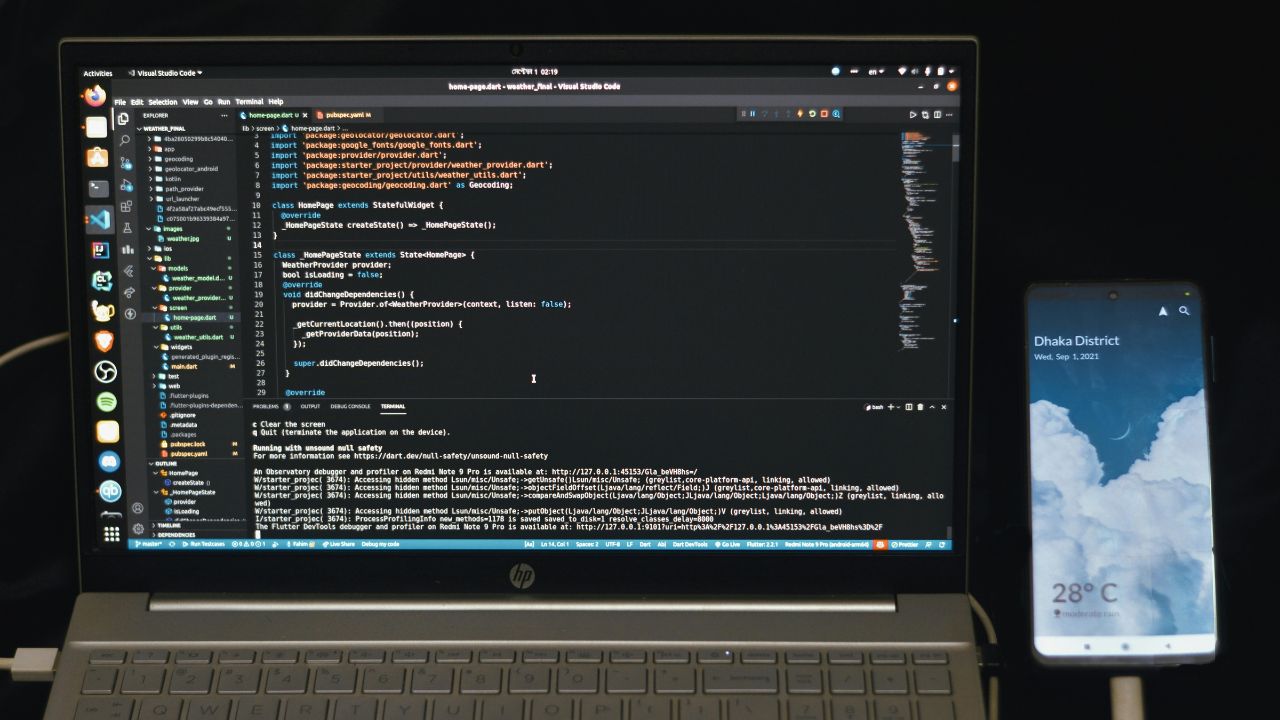

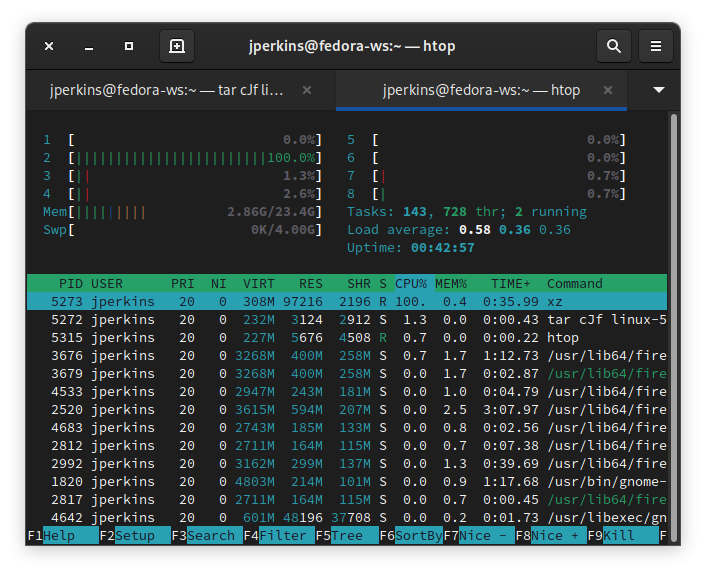

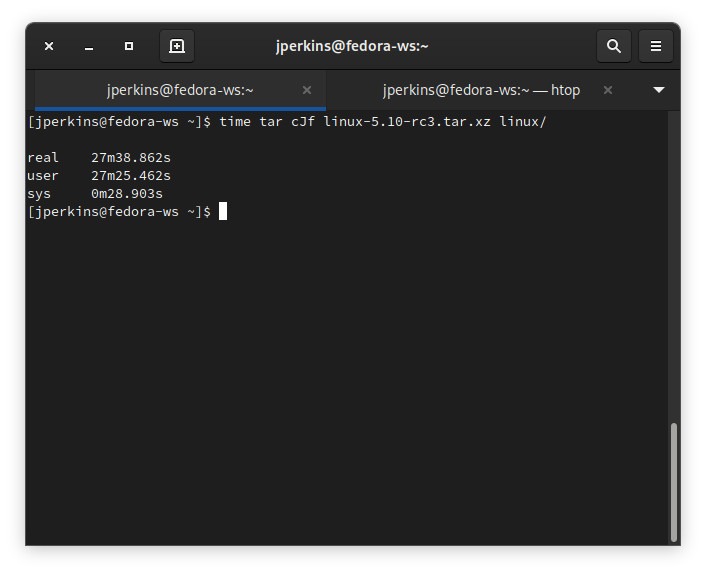

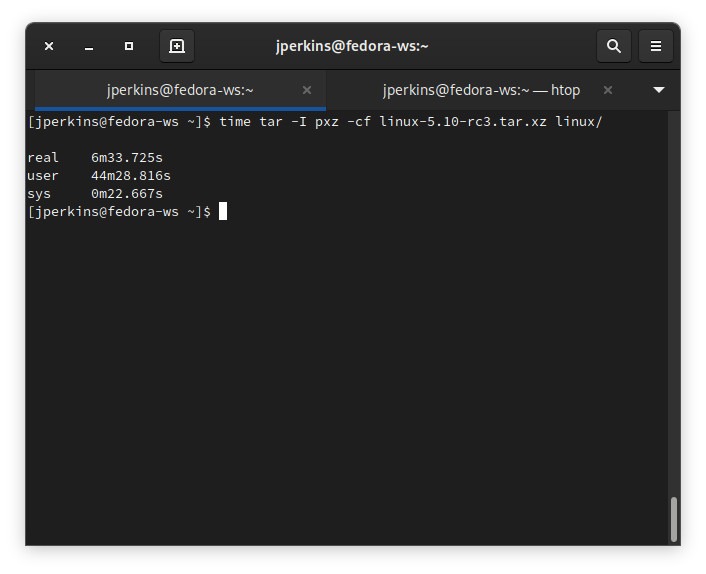

The first will use gzip, the second will use bzip2, and the third will use xz. The filename and the directory will vary depending on what you’re doing, but I pulled the Linux kernel from GitHub into my “/home” directory, and I’ll be using that. I’ll run the xz command with the time command at the front to see how long it takes. You can also see that xz is listed as taking the highest percentage of CPU on this system, but it’s only pinning one core at 100 percent.

As you can see, it took my aging i7-2600S a very long time to compress Linux 5.10-rc3 (around 28 minutes).

This is where these parallel compression tools come in handy. If you’re compressing a large file and looking to get it done faster, I can’t recommend these tools enough.

Using Parallel Compression Tools with Tar

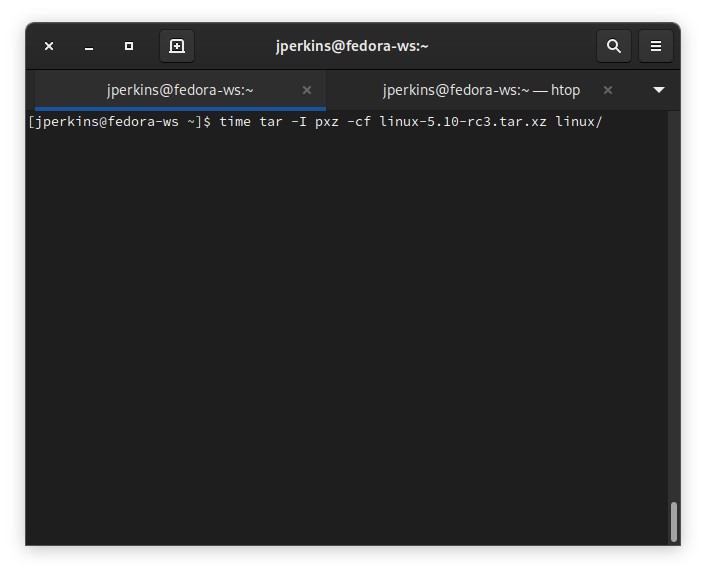

You can either tell tar to use a compression program with the --use-compress-program option, or you can use the shorter -I flag. The syntax is the same for any of these tools:

tar -I pigz -cf linux-5.10-rc3.tar.gz linux/
tar -I pbzip2 -cf linux-5.10-rc3.tar.bz2 linux/
tar -I pxz -cf linux-5.10-rc3.tar.xz linux/
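To show the long option next to the short one, here is a small sketch that creates, lists, and extracts an archive through an external compressor. It assumes GNU tar. The scratch directory and the `sample` data are hypothetical stand-ins so the script runs anywhere, and it falls back to plain gzip if pigz is not installed.

```shell
#!/bin/sh
# Hypothetical scratch data so the sketch runs anywhere; use your own directory instead.
workdir=$(mktemp -d)
mkdir "$workdir/sample"
seq 1 200000 > "$workdir/sample/numbers.txt"

# Fall back to gzip when pigz is missing so the commands still run.
if command -v pigz >/dev/null 2>&1; then
    compressor=pigz
else
    compressor=gzip
fi

# Long form of -I: create the archive through the chosen compressor.
tar --use-compress-program="$compressor" -C "$workdir" -cf "$workdir/sample.tar.gz" sample

# List the contents without extracting anything.
tar -I "$compressor" -tf "$workdir/sample.tar.gz"

# Extract into a separate directory; tar runs the compressor with -d here.
mkdir "$workdir/out"
tar -I "$compressor" -xf "$workdir/sample.tar.gz" -C "$workdir/out"
```

The same flag works in both directions, so the parallel tool can also be used when unpacking. Remove the scratch directory in `$workdir` when you are done.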

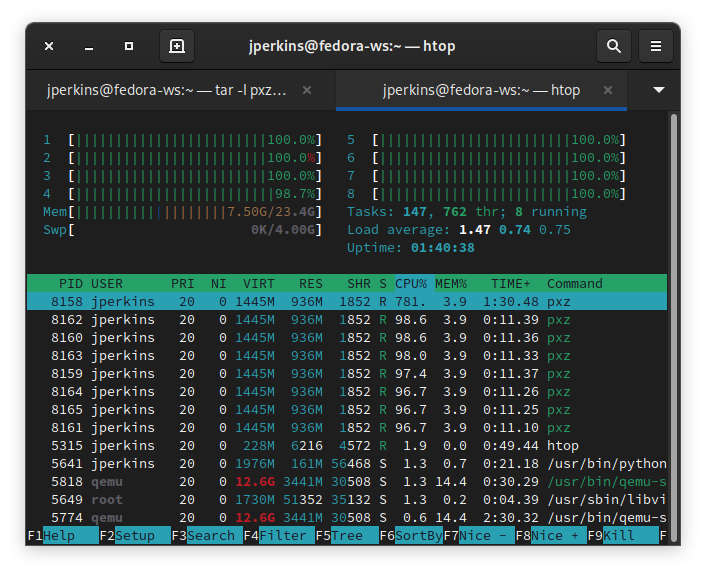

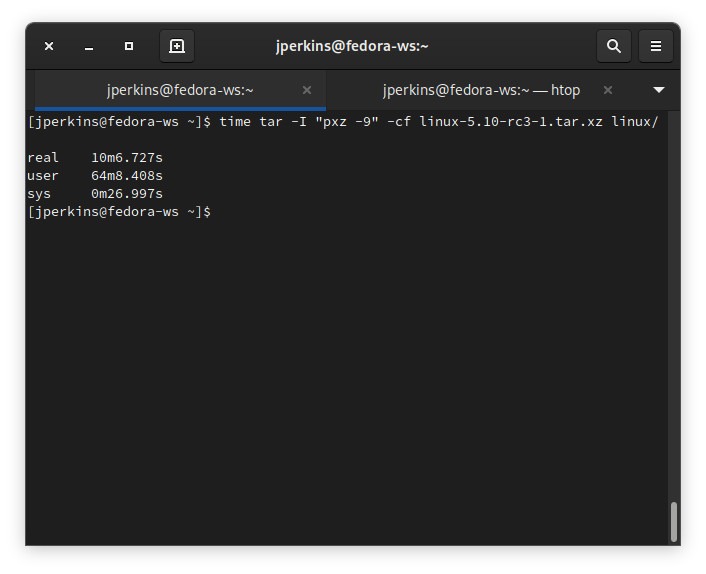

Let’s test it out and see how long it takes my system to compress the Linux Kernel with access to all eight threads of my CPU. You can see my htop readout showing all threads pinned at 100 percent usage because of pxz.

You can see that it took substantially less time to compress that archive (about seven minutes!), and that was with multitasking. I have a virtual machine running in the background, and I’m doing some web browsing at the moment. The Linux kernel’s scheduler shares CPU time between pxz and everything else you have running, so if you let your pxz command run on an otherwise idle system, you may be able to get it done even faster.
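If you want to repeat this kind of comparison on your own machine, a rough sketch might look like the following. It is not the benchmark used above: the data is a hypothetical generated file of about 15 MB, `time_archive` is a helper name made up for this example, and it uses gzip and pigz rather than xz and pxz so that it finishes quickly. It assumes GNU tar.

```shell
#!/bin/sh
# Hypothetical test data (roughly 15 MB of text); substitute a real directory.
workdir=$(mktemp -d)
mkdir "$workdir/sample"
seq 1 2000000 > "$workdir/sample/numbers.txt"

# time_archive NAME COMPRESSOR
# Archives the sample directory through COMPRESSOR and prints the elapsed
# whole seconds and the resulting archive size.
time_archive() {
    start=$(date +%s)
    tar -I "$2" -C "$workdir" -cf "$workdir/$1.tar.gz" sample
    end=$(date +%s)
    echo "$2: $((end - start)) s, $(wc -c < "$workdir/$1.tar.gz") bytes"
}

# Single-threaded baseline.
time_archive single gzip

# Parallel run, only if pigz is available.
if command -v pigz >/dev/null 2>&1; then
    time_archive parallel pigz
else
    echo "pigz not installed; skipping the parallel run"
fi
```

On data this small, the difference may round to zero seconds. Point the script at something the size of a kernel tree to see a meaningful gap.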

Adjusting Compression Levels with pigz, pbzip2, and pxz

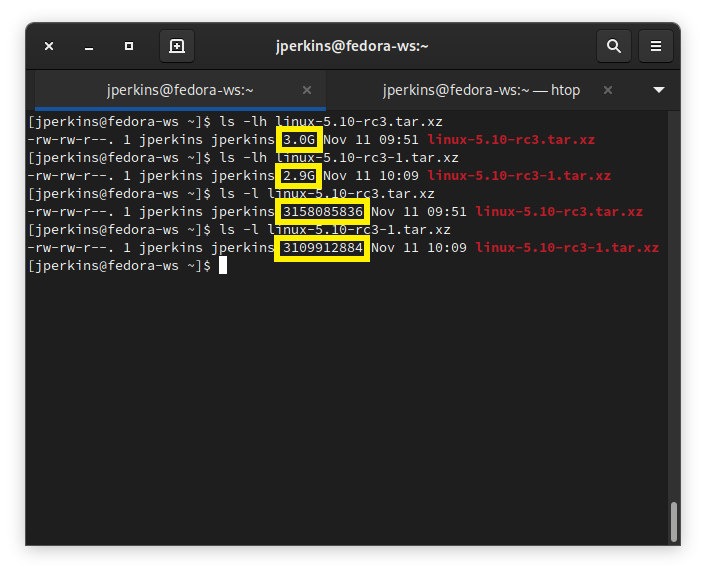

You can also pass compression levels to pxz to make the file even smaller. This will require more RAM, CPU, and time, but it’s worth it if you really need to get a small file. Here’s a comparison of the two commands and their results side by side.

The compression isn’t that much greater, and the time isn’t necessarily worth it, but if every megabyte counts, it’s still a great option.
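As a sketch of how a compression level can be passed through tar, the compressor and its arguments can be quoted together after -I. This relies on GNU tar 1.27 or newer, which accepts arguments in that string. The example below uses hypothetical scratch data and pigz (falling back to gzip) so it runs quickly. The pxz line in the comment assumes pxz accepts xz-style level flags such as -9.

```shell
#!/bin/sh
# Hypothetical scratch data so the sketch runs anywhere; use your own directory instead.
workdir=$(mktemp -d)
mkdir "$workdir/sample"
seq 1 200000 > "$workdir/sample/numbers.txt"

# Fall back to gzip when pigz is missing so the commands still run.
if command -v pigz >/dev/null 2>&1; then
    compressor=pigz
else
    compressor=gzip
fi

# Quote the compressor together with its level flag.
# For the kernel tree, the analogous command would be (assumption):
#   tar -I 'pxz -9' -cf linux-5.10-rc3.tar.xz linux/
tar -I "$compressor -1" -C "$workdir" -cf "$workdir/fast.tar.gz" sample
tar -I "$compressor -9" -C "$workdir" -cf "$workdir/small.tar.gz" sample

# Print both archive sizes for comparison.
echo "level 1: $(wc -c < "$workdir/fast.tar.gz") bytes"
echo "level 9: $(wc -c < "$workdir/small.tar.gz") bytes"
```

The same quoting works for thread limits, for example `tar -I 'pigz -p 4'` or `tar -I 'pbzip2 -p4'`, if you would rather leave a few cores free for other work.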

I hope you enjoyed this guide to using all cores to compress archives using tar.